Sometimes we need to extract information from websites. We can extract data from websites by using their available APIs, but some websites do not offer an API.


This is where web scraping comes into play!

Python is widely used for web scraping because of the ease it provides in writing the core logic. Whether you are a data scientist, developer, engineer, or someone who works with large amounts of data, web scraping with Python is of great help.

When there is no direct way to download the data, web scraping in Python lets you extract large quantities of it in a short period of time.

In this tutorial, we will look into scraping with two powerful Python libraries: BeautifulSoup and Selenium.

BeautifulSoup and urllib

BeautifulSoup is a Python library for pulling data out of HTML and XML files. It does not fetch a webpage by itself, so here we will use the urllib library to download the page.

First, we need to install the BeautifulSoup4 package and the lxml parser using the following commands:

$ sudo pip install beautifulsoup4

$ pip install lxml

OR

$ sudo apt-get install python3-bs4

$ sudo apt-get install python3-lxml

Here I am going to extract the case-studies page of the website https://www.botreetechnologies.com

from urllib.request import urlopen

from bs4 import BeautifulSoup

We import the packages that we are going to use in our program. Now we fetch the webpage as follows:

response = urlopen('https://www.botreetechnologies.com/case-studies')

BeautifulSoup cannot work directly on the raw response we just fetched, so we need to parse it as HTML/XML.

data = BeautifulSoup(response.read(),'lxml')

Here we parsed the HTML content of our webpage into a navigable tree using the lxml parser.

As you can see, the web page lists many case studies. I want to read all of them.

Each case study has a title at the top, followed by some details related to that case. I want to extract all of that information.

We can extract an element based on its tag, class, id, XPath, etc.

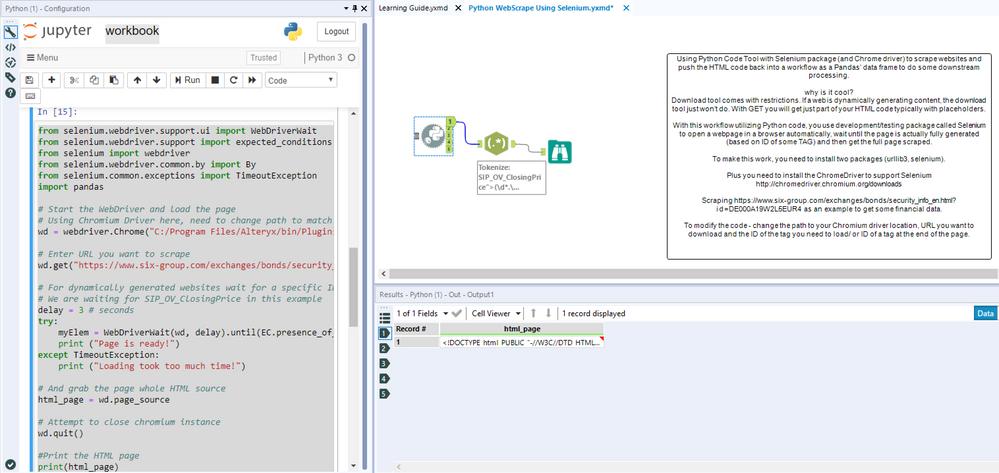

You can get the class of an element by right-clicking on that element and selecting Inspect Element.

case_studies = data.find('div', { 'class' : 'content-section' })

If the page contains multiple elements of this class, find() returns only the first one. To get all the elements that have this class, use the findAll() method.

case_studies = data.findAll('div', { 'class' : 'content-section' })

Now we have every div with class ‘content-section’, each containing its child elements. Taking one of them as case_stud, we get its <h2> tag for the ‘TITLE’ and its <ul> tag for all the children, the <li> elements.

case_stud = case_studies[0]

case_stud.find('h2').find('a').text

case_stud_details = case_stud.find('ul').findAll('li')

Now we have the list of all children of the ul element.

To get the first element from the children list, simply write:

case_stud_details[0]

We can read any attribute of an element. For example, we can get the text of this element by using:

case_stud_details[2].text
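The steps above can be put together in a small sketch. It runs on an inline HTML string instead of the live site, so the markup, titles, and detail texts below are made up for illustration; only the 'content-section' class and the h2/ul/li layout come from the walkthrough. It also uses Python's built-in html.parser so that lxml is not required, and find_all(), which is the current spelling of findAll().

```python
from bs4 import BeautifulSoup

# Hypothetical markup standing in for the case-studies page.
HTML = """
<div class="content-section">
  <h2><a href="/case-studies/alpha">Alpha</a></h2>
  <ul><li>Industry: Retail</li><li>Stack: Python</li><li>Duration: 3 months</li></ul>
</div>
<div class="content-section">
  <h2><a href="/case-studies/beta">Beta</a></h2>
  <ul><li>Industry: Health</li><li>Stack: Ruby</li><li>Duration: 6 months</li></ul>
</div>
"""


def extract_case_studies(html):
    """Return one dict per 'content-section' div: title, link and details."""
    soup = BeautifulSoup(html, "html.parser")
    studies = []
    for section in soup.find_all("div", {"class": "content-section"}):
        link = section.find("h2").find("a")
        details = [li.get_text(strip=True)
                   for li in section.find("ul").find_all("li")]
        studies.append({
            "title": link.get_text(strip=True),
            "url": link["href"],
            "details": details,
        })
    return studies


for study in extract_case_studies(HTML):
    print(study["title"], study["details"])
```

To run it against the real page, pass response.read() to extract_case_studies() instead of the sample string; the selectors may need adjusting if the site's markup differs.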

But here I want to click on the ‘TITLE’ of a case study and open its details page to get all the information.

Since we want to interact with the website to get the dynamic content, we need to imitate the normal user interaction. Such behaviour cannot be achieved using BeautifulSoup or urllib, hence we need a webdriver to do this.

A webdriver basically opens a new browser window that we can control programmatically. It also lets us simulate user events such as click and scroll.

Selenium is one such webdriver.

Selenium Webdriver

Selenium WebDriver accepts commands, sends them to a browser, and retrieves the results.

You can install Selenium on your system using the following simple command:

$ sudo pip install selenium

In order to use it, we need to import selenium in our Python script.

from selenium import webdriver

I am using the Firefox webdriver in this tutorial. Now we are ready to open our webpage, and we can do this by using the following:

self.url = 'https://www.botreetechnologies.com/'

self.browser = webdriver.Firefox()

self.browser.get(self.url)

Now we need to click on ‘CASE-STUDIES’ to open that page.

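We can click on a Selenium element by using the following piece of code:

# find_element('xpath', ...) replaces find_element_by_xpath, which newer Selenium 4 releases removed

self.browser.find_element('xpath', "//div[contains(@id,'navbar')]/ul[2]/li[1]").click()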

Now we are on the case-studies page, where all the case studies are listed with some information.

Here, I want to click on each case study and open its details page to extract all available information.

So, I create a list of links for all case studies and load them one after the other.

To go back to the previous page, you can use the following piece of code:

self.browser.execute_script('window.history.go(-1)')

The final script for using Selenium looks as follows:
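The original script is not reproduced here, so what follows is only a sketch of the flow described above: open the case-studies page, collect the links first, then load them one after the other. The class name CaseStudyScraper and the "h2 a" selector are assumptions, not taken from the site. The browser is passed in, so any object with Selenium's get(), find_elements(), and title works.

```python
from urllib.parse import urljoin


class CaseStudyScraper:
    """Sketch of the scraping flow; the selector below is an assumption."""

    def __init__(self, browser, url="https://www.botreetechnologies.com/"):
        # browser is expected to be a Selenium driver, e.g. webdriver.Firefox()
        self.browser = browser
        self.url = url

    def case_study_links(self):
        # "h2 a" is a hypothetical CSS selector for the case-study titles.
        anchors = self.browser.find_elements("css selector", "h2 a")
        hrefs = [anchor.get_attribute("href") for anchor in anchors]
        # Resolve relative links and skip anchors without an href.
        return [urljoin(self.url, href) for href in hrefs if href]

    def scrape(self):
        """Visit every case study and return {link: page title}."""
        self.browser.get(urljoin(self.url, "case-studies"))
        # Collect all links before navigating away, because elements found
        # on one page are no longer valid once another page is loaded.
        links = self.case_study_links()
        results = {}
        for link in links:
            self.browser.get(link)
            results[link] = self.browser.title
        return results
```

With Selenium installed, it could be used as CaseStudyScraper(webdriver.Firefox()).scrape(). Loading each link directly with get() avoids the need to go back through the browser history between pages.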

And we are done. Now you can extract static webpages or interact with webpages using the above script.

Conclusion: Web Scraping with Python Is an Essential Skill to Have

Today, more than ever, companies are working with huge amounts of data. Learning how to scrape data in Python web scraping projects will take you a long way. In this tutorial, you learned Python web scraping with BeautifulSoup.

Along with that, Python web scraping with Selenium is also a useful skill. Companies need data engineers who can extract data and deliver it to them for gathering useful insights. You have a high chance of success in data extraction if you are working on Python web scraping projects.

If you want to hire Python developers for web scraping, then contact BoTree Technologies. We have a team of engineers who are experts in web scraping. Give us a call today.


The Internet evolves fast, and modern websites often use dynamic content loading mechanisms to provide the best user experience. On the other hand, this makes it harder to extract data from such web pages, as it requires executing the page's JavaScript in the page context while scraping. Let's review several conventional techniques that allow data extraction from dynamic websites using Python.

What is a dynamic website?

A dynamic website is a type of website that can update or load content after the initial HTML load. The browser receives basic HTML with JavaScript and then loads the content using the received JavaScript code. Such an approach speeds up the page load and avoids reloading the same layout each time you open a new page.

Usually, dynamic websites use AJAX to load content dynamically, or even the whole site is based on a Single-Page Application (SPA) technology.

In contrast to dynamic websites, static websites contain all the requested content in the initial page load.

A great example of a static website is example.com:

The whole content of this website is loaded as plain HTML during the initial page load.

To demonstrate the basic idea of a dynamic website, we can create a web page that contains dynamically rendered text. It will not include any request to get information, just a render of a different HTML after the page load:

All we have here is an HTML file with a single <div> in the body that contains text - Web Scraping is hard, but after the page load, that text is replaced with the text generated by the Javascript:
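A page matching this description could look like the sketch below. The two texts and the id test come from the article, while the exact script, the page title, and the helper name write_test_page are assumptions. The helper writes the page to disk so it can be opened in a browser or loaded by a scraper.

```python
from pathlib import Path

# Assumed page content: the exact script is not shown in the article,
# so this is only one way to produce the described behaviour.
TEST_PAGE = """<!DOCTYPE html>
<html>
<head>
  <meta charset="utf-8">
  <title>Dynamic Web Page Example</title>
</head>
<body>
  <div id="test">Web Scraping is hard</div>
  <script>
    // Runs in the browser after the div above has been parsed.
    document.getElementById("test").innerHTML = "I ❤️ ScrapingAnt";
  </script>
</body>
</html>
"""


def write_test_page(path="test.html"):
    """Write the sample page to disk and return its path."""
    target = Path(path)
    target.write_text(TEST_PAGE, encoding="utf-8")
    return target
```

Calling write_test_page() creates test.html in the current directory.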

To prove this, let's open this page in the browser and observe a dynamically replaced text:

Alright, so the browser displays a text, and HTML tags wrap this text.

Can't we use BeautifulSoup or LXML to parse it? Let's find out.

Extract data from a dynamic web page

BeautifulSoup is one of the most popular Python libraries across the Internet for HTML parsing. Almost 80% of web scraping Python tutorials use this library to extract required content from the HTML.

Let's use BeautifulSoup for extracting the text inside <div> from our sample above.

This code snippet uses the os library to open our test HTML file (test.html) from the local directory and creates an instance of BeautifulSoup stored in the soup variable. Using soup, we find the tag with id test and extract the text from it.

In the screenshot in the first part of the article, we've seen that the content of the test page is I ❤️ ScrapingAnt, but the code snippet output is the following:

And the result is different from our expectation (unless you've already figured out what is going on there). Everything is correct from the BeautifulSoup perspective: it parsed the data from the provided HTML file, but we want to get the same result as the browser renders. The reason is the dynamic JavaScript, which has not been executed during HTML parsing.
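A minimal sketch of such a snippet is shown below. To stay self-contained, it parses an inline copy of the page rather than opening test.html from disk, and the page markup itself is an assumption based on the description of the sample. It uses Python's built-in html.parser.

```python
from bs4 import BeautifulSoup

# Assumed content of the sample page (inline instead of reading test.html).
HTML = """<html><body>
<div id="test">Web Scraping is hard</div>
<script>
  document.getElementById("test").innerHTML = "I ❤️ ScrapingAnt";
</script>
</body></html>"""

soup = BeautifulSoup(HTML, "html.parser")

# BeautifulSoup only parses the markup; it never runs the <script> block,
# so the div still holds its original text.
static_text = soup.find(id="test").get_text()
print(static_text)  # prints: Web Scraping is hard
```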

We need the HTML to be run in a browser to see the correct values and then be able to capture those values programmatically.

Below you can find four different ways to execute dynamic website's Javascript and provide valid data for an HTML parser: Selenium, Pyppeteer, Playwright, and Web Scraping API.

Selenium: web scraping with a webdriver

Selenium is one of the most popular web browser automation tools for Python. It allows communication with different web browsers by using a special connector - a webdriver.

To use Selenium with Chrome/Chromium, we'll need to download the webdriver from the repository and place it into the project folder. Don't forget to install Selenium itself by executing:

The Selenium instantiation and scraping flow is the following:

- define and set up the Chrome path variable

- define and set up the Chrome webdriver path variable

- define browser launch arguments (to use headless mode, proxy, etc.)

- instantiate a webdriver with the options defined above

- load a webpage via the instantiated webdriver

In code, it looks like the following:
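The flow listed above can be sketched as follows, assuming the Selenium 4 API (pip install selenium). Both paths are hypothetical placeholders, and make_driver and rendered_html are illustrative names rather than part of Selenium.

```python
# Hypothetical locations; adjust them to your machine.
CHROME_PATH = "/usr/bin/google-chrome"
CHROMEDRIVER_PATH = "./chromedriver"


def make_driver(chrome_path=CHROME_PATH, driver_path=CHROMEDRIVER_PATH):
    """Start a headless Chrome controlled through the given webdriver."""
    # Imported lazily so rendered_html() can be used without Selenium installed.
    from selenium import webdriver
    from selenium.webdriver.chrome.options import Options
    from selenium.webdriver.chrome.service import Service

    options = Options()
    options.binary_location = chrome_path   # which Chrome binary to launch
    options.add_argument("--headless")      # run without a visible window
    service = Service(executable_path=driver_path)
    return webdriver.Chrome(service=service, options=options)


def rendered_html(driver, url):
    """Load url and return the page HTML as the browser currently sees it."""
    try:
        driver.get(url)            # returns once the page has loaded
        return driver.page_source
    finally:
        driver.quit()              # always shut the browser down
```

A call such as rendered_html(make_driver(), "file:///path/to/test.html") returns the HTML that can then be handed to BeautifulSoup. Content that arrives later through AJAX may additionally need an explicit wait before reading page_source.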


And finally, we'll receive the required result:

Using Selenium for dynamic website scraping with Python is not complicated, and it allows you to choose a specific browser and version, but it consists of several moving components that have to be maintained. The code itself contains some boilerplate parts, such as the setup of the browser and the webdriver.

I like to use Selenium for my web scraping project, but you can find easier ways to extract data from dynamic web pages below.

Pyppeteer: Python headless Chrome

Pyppeteer is an unofficial Python port of Puppeteer, the JavaScript (headless) Chrome/Chromium browser automation library. It can do mostly the same things as Puppeteer, but using Python instead of NodeJS.

Puppeteer is a high-level API to control headless Chrome, so it allows you to automate actions you're doing manually with the browser: copy page's text, download images, save page as HTML, PDF, etc.

To install Pyppeteer you can execute the following command:

The usage of Pyppeteer for our needs is much simpler than Selenium:
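A minimal sketch of that usage is shown below, assuming Pyppeteer is installed (pip install pyppeteer) and that test.html exists in the current directory. The function names get_rendered_html and main are illustrative.

```python
import asyncio
from pathlib import Path


async def get_rendered_html(url):
    """Open url in headless Chromium and return the HTML after scripts ran."""
    # Imported lazily: pyppeteer is a third-party package.
    from pyppeteer import launch

    browser = await launch()            # starts headless Chromium
    try:
        page = await browser.newPage()  # open a new tab
        await page.goto(url)            # load the page and run its JavaScript
        return await page.content()     # serialized HTML of the current DOM
    finally:
        await browser.close()


def main(path="test.html"):
    """Render a local HTML file and return the resulting markup."""
    url = Path(path).resolve().as_uri()  # file:// URL for the local file
    return asyncio.run(get_rendered_html(url))
```

The string returned by main() can be passed to BeautifulSoup in the same way as any other HTML.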

I've tried to comment on every atomic part of the code for a better understanding. However, generally, we've just opened a browser page, loaded a local HTML file into it, and extracted the final rendered HTML for further BeautifulSoup processing.

As we can expect, the result is the following:

We did it again, this time without worrying about finding, downloading, and connecting a webdriver to a browser. However, Pyppeteer looks abandoned and not properly maintained. This situation may change in the near future, but I'd suggest looking at a more powerful library.

Playwright: Chromium, Firefox and Webkit browser automation

Playwright can be considered an extended Puppeteer, as it allows using more browser types (Chromium, Firefox, and Webkit) to automate modern web app testing and scraping. You can use the Playwright API in JavaScript and TypeScript, Python, C#, and Java. And it's excellent that the original Playwright maintainers support Python.

The API is almost the same as Pyppeteer's, but it has both sync and async versions.

Installation is simple as always:


Let's rewrite the previous example using Playwright.
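A sketch using Playwright's sync API could look like this. It assumes the package and its browsers are installed (pip install playwright, then playwright install); file_url and get_rendered_html are illustrative helper names.

```python
from pathlib import Path


def file_url(path):
    """Turn a local file path into a file:// URL that a browser can open."""
    return Path(path).resolve().as_uri()


def get_rendered_html(url):
    """Open url in headless Chromium and return the HTML after scripts ran."""
    # Imported lazily: playwright is a third-party package.
    from playwright.sync_api import sync_playwright

    with sync_playwright() as playwright:
        browser = playwright.chromium.launch()  # headless by default
        try:
            page = browser.new_page()
            page.goto(url)                      # load the page, run its scripts
            return page.content()               # serialized HTML of the DOM
        finally:
            browser.close()
```

Calling get_rendered_html(file_url("test.html")) yields HTML that can be passed to BeautifulSoup; swapping playwright.chromium for playwright.firefox or playwright.webkit selects a different browser.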

As a good tradition, we can observe our beloved output:

We've gone through several different data extraction methods with Python, but is there any more straightforward way to implement this job? How can we scale our solution and scrape data with several threads?

Meet the web scraping API!

Web Scraping API

ScrapingAnt web scraping API provides the ability to scrape dynamic websites with only a single API call. It already handles headless Chrome and rotating proxies, so the response will already consist of JavaScript-rendered content. ScrapingAnt's proxy pool prevents blocking and provides a constant and high data extraction success rate.

Usage of web scraping API is the simplest option and requires only basic programming skills.

You do not need to maintain the browser, library, proxies, webdrivers, or any other aspect of a web scraper, and can focus on the most exciting part of the work: data analysis.

As the web scraping API runs on the cloud servers, we have to serve our file somewhere to test it. I've created a repository with a single file: https://github.com/kami4ka/dynamic-website-example/blob/main/index.html

To check it out as HTML, we can use another great tool: HTMLPreview

The final test URL for scraping the dynamic web data looks like this: http://htmlpreview.github.io/?https://github.com/kami4ka/dynamic-website-example/blob/main/index.html

The scraping code itself is the simplest one across all four described libraries. We'll use the ScrapingAntClient library to access the web scraping API.

Let's install it first:

And use the installed library:

To get your API token, please visit the Login page to authorize in the ScrapingAnt User panel. It's free.

And the result is still the required one.

All the headless browser magic happens in the cloud, so you only need to make an API call to get the result.

Check out the documentation for more info about ScrapingAnt API.

Summary

Today we've checked four free tools that allow scraping dynamic websites with Python. All these libraries use a headless browser (or API with a headless browser) under the hood to correctly render the internal Javascript inside an HTML page. Below you can find links to find out more information about those tools and choose the handiest one:

Happy web scraping, and don't forget to use proxies to avoid blocking 🚀